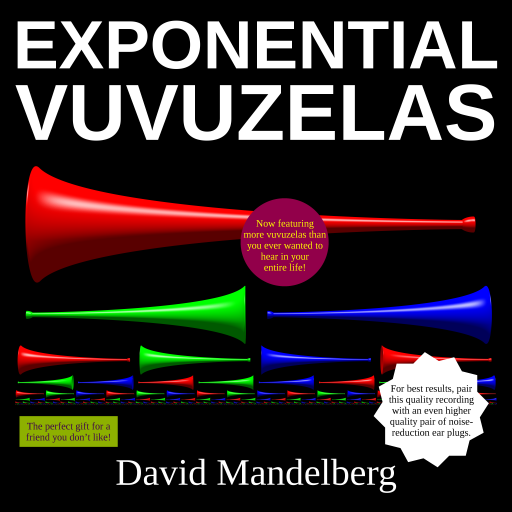

If you’re the type of person who has always felt that your music collection needed a few (hundred) more vuvuzelas, then you are in luck! Presenting a complete recording of Exponential Vuvuzelas, available today for audio download and music video streaming!

- Exponential Vuvuzelas: Act 1, Crescendo: N. 1 Vuvuzela

- Exponential Vuvuzelas: Act 1, Crescendo: I. 2 Vuvuzelas

- Exponential Vuvuzelas: Act 1, Crescendo: II. 4 Vuvuzelas

- Exponential Vuvuzelas: Act 1, Crescendo: III. 8 Vuvuzelas

- Exponential Vuvuzelas: Act 1, Crescendo: IV. 16 Vuvuzelas

- Exponential Vuvuzelas: Act 1, Crescendo: V. 32 Vuvuzelas

- Exponential Vuvuzelas: Act 1, Crescendo: VI. 64 Vuvuzelas

- Exponential Vuvuzelas: Act 1, Crescendo: VII. 128 Vuvuzelas

- Exponential Vuvuzelas: Act 1, Crescendo: VIII. 256 Vuvuzelas

- Exponential Vuvuzelas: Act 1, Crescendo: IX. 512 Vuvuzelas

- Exponential Vuvuzelas: Act 1, Crescendo: X. 1024 Vuvuzelas

- Exponential Vuvuzelas: Act 2, Diminuendo: I. 1024–0 Vuvuzelas: “Outro”

- Exponential Vuvuzelas: Act 2, Diminuendo: N. 0 Vuvuzelas: “A much needed break for your ears”

- Bonus! All 37 Samples From Exponential Vuvuzelas, for Your Listening Agony

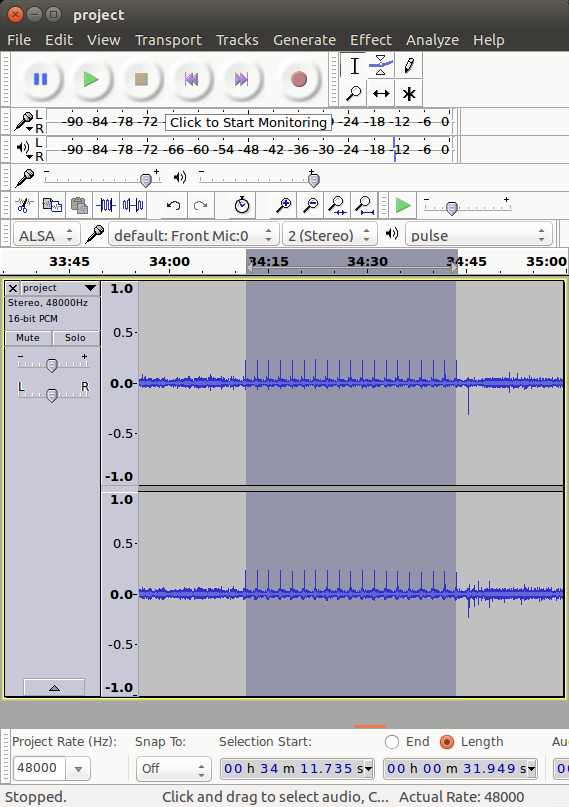

In addition to the music and videos, there’s also a score of the composition, the code used to turn 37 vuvuzela samples into 1024 simultaneous vuvuzelas, and the code used to generate the visual part of the music videos.
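For a rough idea of what turning 37 samples into 1024 simultaneous vuvuzelas could involve, here is a minimal sketch. It is not the project's actual code: the function name `mix_voices`, the random choice of sample and start offset per voice, the peak normalization, and the synthetic sine-wave stand-ins for the recordings are all assumptions made for illustration.

```python
import math
import random


def mix_voices(samples, n_voices, length, seed=0):
    """Layer n_voices randomly chosen, randomly offset samples into one buffer.

    Hypothetical helper: `samples` is a list of mono clips, each a list of
    floats in [-1.0, 1.0]; `length` is the output length in frames.
    """
    rng = random.Random(seed)
    mix = [0.0] * length
    for _ in range(n_voices):
        sample = rng.choice(samples)    # which of the clips this voice plays
        offset = rng.randrange(length)  # where in the buffer the voice starts
        for i, value in enumerate(sample):
            if offset + i >= length:
                break                   # cut off voices that run past the end
            mix[offset + i] += value
    # Scale so the loudest frame sits at full scale instead of clipping.
    peak = max(abs(v) for v in mix)
    if peak > 0:
        mix = [v / peak for v in mix]
    return mix


# Stand-in data (assumption): 37 short sine bursts instead of real recordings.
samples = [
    [math.sin(2 * math.pi * (200 + 10 * k) * t / 8000) for t in range(400)]
    for k in range(37)
]
mix = mix_voices(samples, n_voices=1024, length=4000)
```

Doubling `n_voices` from 1 up to 1024 would give one mix per movement of Act 1. Writing the result to an audio file, and whatever pitch or timing variation the real code applies, are left out of this sketch.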